The Teams Producing the Most Actionable Security Reporting Are Not the Ones Building the Most

Security Metrics & Reporting in the Age of AI

Introduction

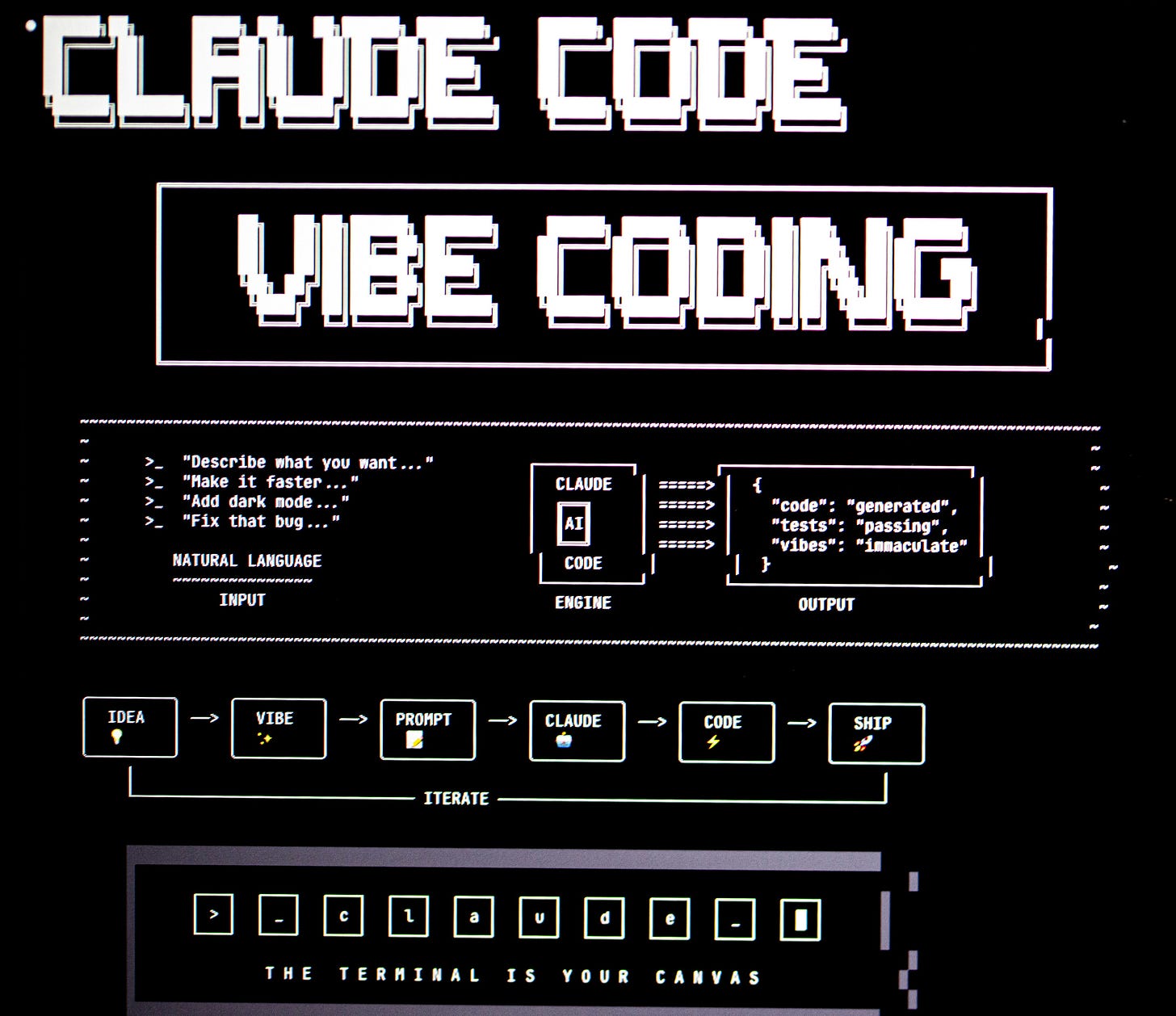

Behind all the AI hype and marketing, there is one use case that really works: AI coding. Tools like Claude Code and Codex are remarkable at quickly building working code.

The success of this use case has led to a proliferation of vibe-coded security tools, particularly dashboards and reports. This application makes total sense. Security teams have rarely had the luxury of being supported by their organization’s BI team, and they deal with vast amounts of disconnected data from siloed tools across endpoint, identity, cloud, and network, each with its own data model and its own vendor portal.

To compound the problem, security vendors provide notoriously bad reporting. Not only are their native reporting features often ineffective, but practitioners also commonly need the data presented in ways that the vendor simply doesn’t support. You end up exporting CSVs and doing manual work to answer questions.

So when AI coding arrived and made custom reporting suddenly accessible, security teams jumped at it. And they should have. But there’s a trap:

The teams producing the most actionable security reporting in the age of AI are not the ones building the most, but the ones with a framework for intentional exclusion.

AI Coding Has Made Reporting Cost Near-Zero

Before AI coding tools, security practitioners had three primary options for building custom reporting:

- Programming languages like Python or R
- Business intelligence platforms like Power BI or Tableau
- Good old Excel

We all know where most teams ended up. And that’s to be expected. Security professionals are already expected to be experts across a domain that’s a mile wide. Expecting them to also be competent BI developers on top of that is unrealistic and, frankly, a poor use of their expertise.

The practical reality was that adding any new report or dashboard feature came with a real cost. No matter which of the three paths you took, every addition required an investment of time and a reallocation of cycles away from other work. That cost wasn’t always explicit, but it was always there, and it acted as a natural filter. Teams couldn’t build everything, so they had to make choices about what mattered.

AI coding has dissolved that constraint. What previously took a security team weeks to stand up can now be done in hours by anyone who can write a clear prompt. The barrier to producing a working dashboard has dropped to near zero.

That sounds like an unambiguous win. It isn’t. Or at least, not automatically. Because the constraint that AI removed wasn’t just slowing you down. It was also forcing a discipline that most teams didn’t realize they were benefiting from.

Comprehensiveness Feels Like Quality. It Isn’t.

Adding more to a dashboard feels more thorough. That instinct is understandable, but it’s exactly backwards.

The producer and the consumer of a report have opposite relationships with completeness. For the person building the dashboard, including everything can feel comprehensive. But the consumer, whether an analyst, an executive, or an engineer making a decision, experiences that same comprehensiveness as friction. More inputs mean more cognitive work to find what matters, and they force the viewer to do the synthesis that the report was supposed to do for them.

This is the completeness trap: the builder’s sense of thoroughness actively degrades the viewer’s ability to act.

It gets worse. Adding to a report dilutes the signal value of everything else surrounding it. When ten metrics are present, a viewer distributes their attention across all ten. The two that actually matter receive a fraction of the focus they would command if they were the only things on the screen. Every addition is a tax on what was already there.
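To put rough numbers on that tax, here is a minimal sketch in Python. It assumes a viewer splits attention evenly across every metric on the screen, which is a simplification for illustration and not a measured model of how people read dashboards. The function name `attention_share` and its parameters are hypothetical.

```python
def attention_share(total_metrics: int, metrics_that_matter: int) -> float:
    """Fraction of a viewer's attention that lands on the metrics that matter.

    Assumes attention is split evenly across every metric on the screen.
    This is an illustrative simplification, not a measured model.
    """
    if total_metrics <= 0:
        raise ValueError("total_metrics must be positive")
    if not 0 <= metrics_that_matter <= total_metrics:
        raise ValueError("metrics_that_matter must be between 0 and total_metrics")
    return metrics_that_matter / total_metrics


if __name__ == "__main__":
    # Two metrics drive the decision; everything else is added context.
    for total in (2, 5, 10, 20):
        share = attention_share(total, metrics_that_matter=2)
        print(f"{total:>2} metrics on screen -> {share:.0%} of attention on the 2 that matter")
```

Under this even-split assumption, going from two metrics on the screen to ten cuts the attention on the two that matter from 100% to 20%.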

An opinionated dashboard may look like it’s missing something. However, it actually reflects more useful analytical work. Someone made hard calls and understood the decisions being made well enough to know what to leave out. That judgment is invisible in the output, which is precisely what makes it easy to undervalue.

Conclusion

AI coding tools have given security teams a genuine capability. The ability to build custom, fit-for-purpose reporting without a BI team or a six-week development cycle is a real advantage, and teams should use it.

But the same tools that make good reporting faster also make bad reporting faster. And in an environment where the cost of building has collapsed, the discipline of intentional exclusion is even more important.

In part two, we’ll get into the framework for how to make those exclusion decisions, and what it looks like to build a security reporting program around the metrics that actually drive decisions.